Haroon Ashraf aims to square the circle:

Data modeling is the way you can arrange and link your organizational data (typically in the form of tables) for reporting and analysis.

In other words, it is the strategy of linking tables with each other to get useful information by following the standard practices and domain knowledge of the organization.

Traditionally, it means implementing a star or snowflake schema as part of a data warehouse BI solution.
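To make the star schema idea concrete, here is a minimal Python sketch. It is not Power BI code, and every table and column name in it (`fact_sales`, `dim_product`, `dim_date`, and so on) is a hypothetical example rather than anything from the original article. It only illustrates the shape: one fact table whose keys point at small dimension tables, which supply the attributes you group by.

```python
from collections import defaultdict

# Hypothetical dimension tables, keyed by surrogate key.
dim_product = {
    1: {"name": "Laptop", "category": "Hardware"},
    2: {"name": "Mouse", "category": "Hardware"},
    3: {"name": "Antivirus", "category": "Software"},
}
dim_date = {
    20240101: {"year": 2024, "month": 1},
    20240201: {"year": 2024, "month": 2},
}

# Hypothetical fact table: each row references the dimensions by key
# and carries a numeric measure.
fact_sales = [
    {"product_key": 1, "date_key": 20240101, "amount": 1200.0},
    {"product_key": 2, "date_key": 20240101, "amount": 25.0},
    {"product_key": 3, "date_key": 20240201, "amount": 60.0},
    {"product_key": 1, "date_key": 20240201, "amount": 1100.0},
]


def sales_by_category_and_month(facts, products, dates):
    """Follow each fact row's keys into the dimensions and sum the measure."""
    totals = defaultdict(float)
    for row in facts:
        category = products[row["product_key"]]["category"]
        month = dates[row["date_key"]]["month"]
        totals[(category, month)] += row["amount"]
    return dict(totals)


totals = sales_by_category_and_month(fact_sales, dim_product, dim_date)
for key in sorted(totals):
    print(key, totals[key])
```

In a real model the relationships would be defined once in the shared data model, so report authors get the same lookups without rewriting them.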

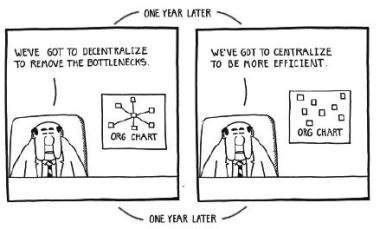

What is Centralized Data Modeling?

Centralized data modeling means a generic data model consisting of some commonly used tables, relationships, and hierarchies that are shared across the organization. These elements are the starting point for Power BI report development for anyone eligible, interested, and capable of doing so.

With that in mind, read on to learn how you can use Power BI templates to bring this about. I joke about squaring the circle here because if you treat Power BI as a self-service business intelligence tool, the users may not be totally familiar with what you’re doing and could end up accidentally undermining your plans. That said, it’s a good approach to solving this common problem.